“Your Sharpe ratio is too low.” This was the comment I received from a hiring manager at a very large quantitative hedge fund, followed by: “If you give us the code, we can help you make it better.” This did not sit well with me, because my returns had a strongly positively skewed distribution. Clearly the hiring manager was not differentiating between upside and downside variance, and was also trying to get something for nothing!

My thoughts immediately turned to constructing a more relevant metric than the Sharpe ratio. I reached out to my old professor with an idea for differentiating between the two types of variance, and he promptly told me I was describing partial moments, which he had been working with for the last 25 years. When David Nawrocki showed me the partial moment formulas on the blackboard, it all just clicked!

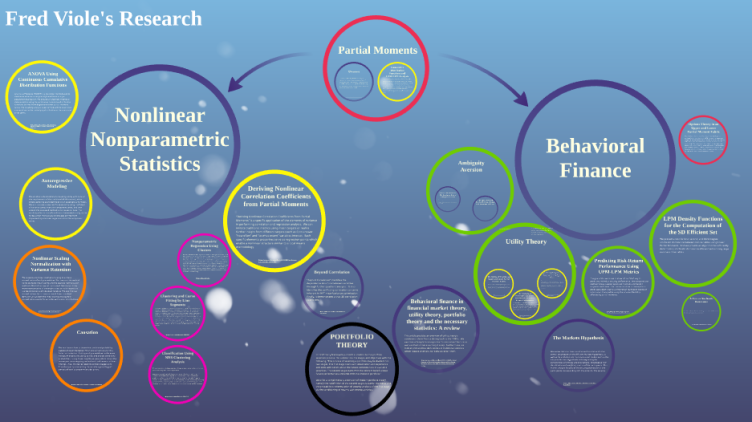

Step 1: See the Partial Moments circle in the research hierarchy for the basic statistical equivalences of partial moments that emerged from our initial discussion.
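A minimal sketch of the two partial moments, assuming the common population-style definitions (average over all observations, a chosen `target`, and a `degree`). The names `lpm` and `upm` and the sample returns are hypothetical illustrations, not the NNS package's API or real data. The sketch checks the basic equivalence: variance about the mean splits exactly into a lower (downside) part and an upper (upside) part.

```python
from statistics import fmean, pvariance

# Hypothetical helpers for illustration; not the NNS package's API.


def lpm(degree, target, xs):
    """Lower partial moment: mean of (target - x)**degree over points below target."""
    if degree == 0:
        # Degree 0 is treated as the empirical probability of landing at or below target
        return sum(1 for x in xs if x <= target) / len(xs)
    return sum((target - x) ** degree for x in xs if x < target) / len(xs)


def upm(degree, target, xs):
    """Upper partial moment: mean of (x - target)**degree over points above target."""
    if degree == 0:
        # Complement of lpm(0, ...): probability of landing above target
        return sum(1 for x in xs if x > target) / len(xs)
    return sum((x - target) ** degree for x in xs if x > target) / len(xs)


# Hypothetical, positively skewed periodic returns (illustrative only)
returns = [0.04, -0.02, 0.10, 0.01, -0.05, 0.25, 0.03]
mu = fmean(returns)

downside = lpm(2, mu, returns)  # variance contributed by outcomes below the mean
upside = upm(2, mu, returns)    # variance contributed by outcomes above the mean
print(downside, upside, downside + upside, pvariance(returns))
```

The last two printed values agree up to floating-point rounding. For this skewed sample the upside term is the larger of the two, and it is exactly that part which a variance-based ratio such as Sharpe counts as risk.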

Utility Theory

My idea to replace the Sharpe ratio was initially met with enthusiasm; however, it was quickly pointed out that it lacked support from utility theory. So my new risk metric was put on hold while I read up on utility theory. As I read beyond my introduction to the subject years earlier, neither expected utility theory (EUT) nor prospect theory (PT) gave me that “a-ha” moment. Looking at the various utility functions in the literature, however, I saw the link to partial moments. Fortunately, there was some existing literature on the use of partial moments in utility theory.

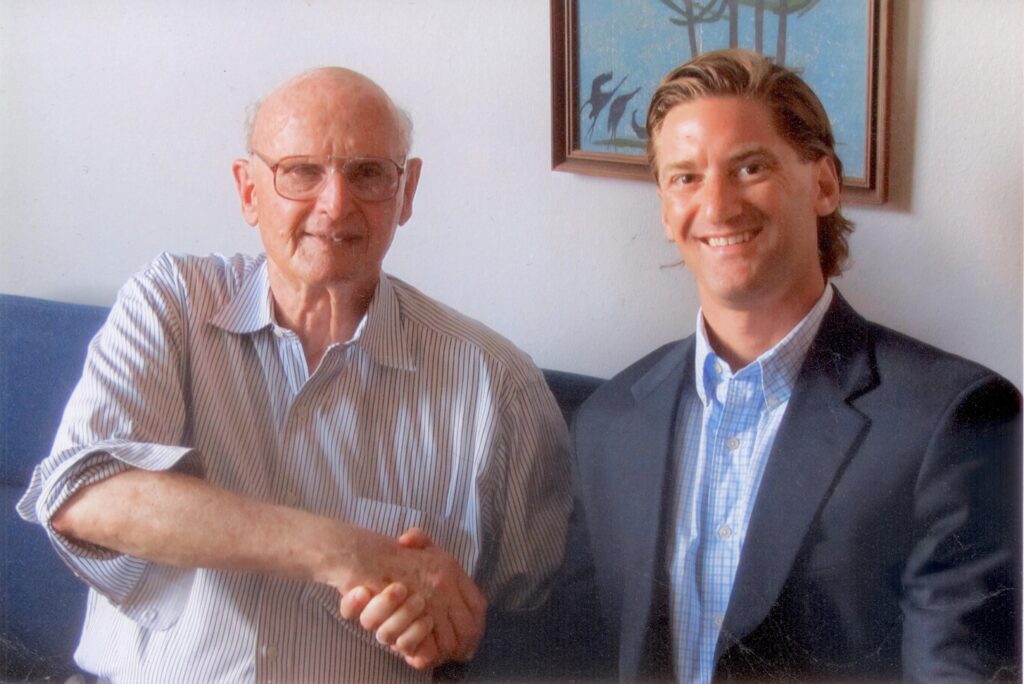

Expanding on prior work by Fishburn, Kochenberger, and Holthausen, David and I were able to derive a partial moment equivalent for any known utility function in the literature. We wrote several papers on the topic, developing the argument normatively and descriptively and eventually testing it. At this point I took the liberty of sending a copy of the work to Nobel laureate Harry Markowitz, as his earlier work on the topic resonated with my underlying portfolio theory aims.

To my utter surprise and delight, Harry responded. This eventually turned into a multi-year correspondence that I am still learning from today. His challenges motivated me, and through this civil discourse I earned his respect; he graciously wrote me several letters of recommendation. He also told me I had demonstrated a general portfolio theory of which mean-variance is a subset, but I digress!
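To make the utility equivalence concrete for one family, assume a piecewise power utility around a target: a gain above the target is valued as `(x - target)**a` and a loss as `-lam * (target - x)**b`, the kinked shape familiar from prospect theory. Averaging this utility over a sample gives exactly the upper partial moment of degree `a` minus `lam` times the lower partial moment of degree `b`. The functions and parameter values below are hypothetical and cover only this one family, not the general result in the papers.

```python
# Hypothetical helpers for illustration; assumes degrees a > 0 and b > 0.


def lpm(degree, target, xs):
    """Lower partial moment of positive degree about target."""
    return sum((target - x) ** degree for x in xs if x < target) / len(xs)


def upm(degree, target, xs):
    """Upper partial moment of positive degree about target."""
    return sum((x - target) ** degree for x in xs if x > target) / len(xs)


def utility(x, target, a, b, lam):
    """Piecewise power utility: gains raised to a; losses raised to b and scaled by lam."""
    if x > target:
        return (x - target) ** a
    return -lam * (target - x) ** b


def expected_utility(xs, target, a, b, lam):
    """Average utility over an empirical sample of outcomes."""
    return sum(utility(x, target, a, b, lam) for x in xs) / len(xs)


def partial_moment_utility(xs, target, a, b, lam):
    """The same quantity written with partial moments: UPM(a) - lam * LPM(b)."""
    return upm(a, target, xs) - lam * lpm(b, target, xs)


outcomes = [0.04, -0.02, 0.10, 0.01, -0.05, 0.25, 0.03]  # hypothetical returns
params = [(0.88, 0.88, 2.25), (1.0, 2.0, 1.0), (0.5, 1.5, 3.0)]  # illustrative (a, b, lam)
for a, b, lam in params:
    print(expected_utility(outcomes, 0.0, a, b, lam),
          partial_moment_utility(outcomes, 0.0, a, b, lam))
```

Each printed pair matches up to rounding, because the utility function's two branches map one-to-one onto the upper and lower partial moments.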

Risk Metric

Armed with utility theory support, David and I ran numerous tests of the partial moment risk metric. It worked, and it worked for all types of utility functions! Given the ubiquity of partial moments in utility theory and this successful replacement for the Sharpe ratio, our thoughts turned to other representations of, or proxies for, utility theory. Stochastic dominance fit the bill, and we eventually showed the partial moment equivalence in a test of stochastic dominance, as well as how to extract the stochastically dominant efficient set of securities for a given degree.

Step 2: See the Behavioral Finance circle for an exposition of the related utility theory insights.
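The textbook link between stochastic dominance and partial moments can be sketched directly: first-degree dominance compares degree-0 lower partial moments (the empirical CDFs) at every threshold, and second-degree dominance compares degree-1 lower partial moments (the integrated CDFs). This is a minimal illustration assuming empirical samples, weak inequalities everywhere, and a strict inequality somewhere; `dominates` and the sample data are hypothetical, not the test used in the papers or the NNS package.

```python
# Hypothetical helpers for illustration; not the NNS package's API.


def lpm(degree, target, xs):
    """Lower partial moment; degree 0 is the empirical CDF at target."""
    if degree == 0:
        return sum(1 for x in xs if x <= target) / len(xs)
    return sum((target - x) ** degree for x in xs if x < target) / len(xs)


def dominates(x, y, sd_degree, tol=1e-12):
    """True if sample x stochastically dominates sample y (first or second degree).

    First degree:  LPM(0, t, x) <= LPM(0, t, y) for every t (CDF comparison).
    Second degree: LPM(1, t, x) <= LPM(1, t, y) for every t (integrated CDF).
    The inequality must also be strict for at least one t.
    """
    if sd_degree not in (1, 2):
        raise ValueError("this sketch only handles first- and second-degree dominance")
    n = sd_degree - 1
    # For these two degrees the empirical curves only change slope or level at
    # observed points, so checking the pooled sample values is sufficient.
    grid = sorted(set(x) | set(y))
    diffs = [lpm(n, t, x) - lpm(n, t, y) for t in grid]
    return all(d <= tol for d in diffs) and any(d < -tol for d in diffs)


shifted = [0.01, 0.02, 0.03, 0.04]
base = [0.00, 0.01, 0.02, 0.03]
steady = [0.02, 0.02]
spread = [0.00, 0.04]  # same mean as steady, more dispersion

print(dominates(shifted, base, 1))   # every outcome is 0.01 higher
print(dominates(steady, spread, 1))  # the CDFs cross
print(dominates(steady, spread, 2))  # a mean-preserving spread is dominated
```

The three calls return `True`, `False`, and `True`: `steady` fails the first-degree test but passes the second-degree one, which is the kind of distinction an efficient set "for a given degree" depends on.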

Co-Partial Moments

The success of the stochastic dominance tests and the resulting efficient sets led to thoughts of co-partial moments to effectively describe more than one security or portfolio. After reflecting on how partial moments parse the variance of a single security, the multivariate application became clear.

David had been working with co-lower partial moments as a method of addressing portfolio risk, but a full partial moment representation of the covariance had not been developed. By parsing the joint distribution of securities, I was able to define the covariance matrix in terms of its corresponding co-partial moment matrices. This definition opened up a whole new world of viewing the relationship between variables.
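A minimal sketch of this parsing for two series, assuming degree-1 co-partial moments taken about each series' mean and averaged over all observations. Each observation falls into one of four quadrants: the two concordant quadrants (both above, both below) add to covariance and the two divergent quadrants subtract. The function and term names are hypothetical labels chosen here, not the NNS package's API.

```python
from statistics import fmean

# Hypothetical helpers for illustration; not the NNS package's API.


def co_partial_moments(xs, ys):
    """Split the degree-1 co-moment about the means into four quadrant terms."""
    mx, my = fmean(xs), fmean(ys)
    n = len(xs)
    co_upm = co_lpm = div_xup_ydown = div_xdown_yup = 0.0
    for x, y in zip(xs, ys):
        dx, dy = x - mx, y - my
        if dx > 0 and dy > 0:
            co_upm += dx * dy          # both above their means
        elif dx < 0 and dy < 0:
            co_lpm += dx * dy          # both below: product of two negatives is positive
        elif dx > 0 and dy < 0:
            div_xup_ydown += -dx * dy  # x above, y below, stored as a positive magnitude
        elif dx < 0 and dy > 0:
            div_xdown_yup += -dx * dy  # x below, y above, stored as a positive magnitude
        # points with dx == 0 or dy == 0 contribute nothing to covariance
    return co_upm / n, co_lpm / n, div_xup_ydown / n, div_xdown_yup / n


def population_covariance(xs, ys):
    """Classical covariance, dividing by the number of observations."""
    mx, my = fmean(xs), fmean(ys)
    return sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / len(xs)


x = [1.0, 2.0, 3.0, 4.0, 5.0]
y = [2.0, 1.0, 4.0, 3.0, 6.0]
cu, cl, d1, d2 = co_partial_moments(x, y)
print(cu + cl - d1 - d2, population_covariance(x, y))
```

Repeating this for every pair of series gives four matrices whose signed sum is the covariance matrix. On the diagonal, where a series is paired with itself, the divergent terms vanish and the two co-moments reduce to the degree-2 upper and lower partial moments.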

Nonlinear Statistics

Many classical statistics are based on the variance or covariance of variables. I realized I could redefine all of these techniques using the partial moment definitions of variance and covariance. As I explored and redefined these techniques in more detail, their pervasive linearity assumption did not sit well with me (much like the Sharpe ratio comment). While investigating nonlinear relationships where classical methods failed, I noticed some properties of the co-partial moments that seemed interesting and relevant.

The first of these insights led to a new measure of correlation and dependence, which replicates linear instances and also captures nonlinear relationships. From these partitions of the covariance, the nonlinear regression method was born. This was nothing short of an epiphany for me and led to a flurry of statistical insights using partial moments applicable to the nonlinear relationships that take place around us each and every day.

Step 3: See the Nonlinear Nonparametric Statistics circle for an appreciation of what the alternative representation of the covariance matrix enabled.
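A small illustration of why the covariance partitions carry information, assuming the same degree-1 quadrant split about the means: for y = x² on a symmetric grid, covariance (and therefore Pearson correlation) is zero even though y is completely determined by x. `quadrant_terms` is a hypothetical helper written for this example; it is not the NNS dependence measure.

```python
from statistics import fmean

# Hypothetical helper for illustration; not the NNS dependence measure.


def quadrant_terms(xs, ys):
    """Degree-1 co-partial moments about the means, each stored as a positive magnitude."""
    mx, my = fmean(xs), fmean(ys)
    n = len(xs)
    terms = {"co_upm": 0.0, "co_lpm": 0.0, "div_xup_ydown": 0.0, "div_xdown_yup": 0.0}
    for x, y in zip(xs, ys):
        dx, dy = x - mx, y - my
        if dx > 0 and dy > 0:
            terms["co_upm"] += dx * dy
        elif dx < 0 and dy < 0:
            terms["co_lpm"] += dx * dy
        elif dx > 0 and dy < 0:
            terms["div_xup_ydown"] -= dx * dy
        elif dx < 0 and dy > 0:
            terms["div_xdown_yup"] -= dx * dy
    return {k: v / n for k, v in terms.items()}


x = [-3, -2, -1, 0, 1, 2, 3]
y = [v ** 2 for v in x]  # a perfect but non-monotone relationship
t = quadrant_terms(x, y)
cov = t["co_upm"] + t["co_lpm"] - t["div_xup_ydown"] - t["div_xdown_yup"]
print(t)
print(cov)  # zero up to rounding, so Pearson correlation is zero as well
```

Covariance nets out to zero, yet every quadrant term is positive: the concordant and divergent parts cancel rather than vanish. Keeping the parts separate is what lets a partial moment approach register a dependence that a single linear coefficient averages away.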

R-package

This is where another collaborator and huge influence, Hrishikesh Vinod, entered. Hrishikesh took an interest, having already done some work with partial moments himself. With a career that included working with both Tukey and Fishburn at Bell Labs, he seemed to be the perfect person to discuss these novel insights with! He suggested putting these ideas, which then resided in Microsoft Excel, into an R-package. Respecting his eminent qualifications, I immediately began learning R and coding all of these functions.

With the ease of experimentation that the R-package brought, we were able to produce several papers on the nonlinear regression and the partial derivative insights the method generated.

The R-package has grown as I’ve discovered more areas to which these underlying partial moment representations and techniques can be applied. One particular insight is that the nonlinear regression can provide results comparable to those of many current machine learning methods!

Step 4: See the growing list of examples using the NNS R-package, including basic statistics, regression, machine learning, time-series forecasting…

OVVO Labs

Please see the following material for an introductory discussion of the topics mentioned, and visit our media page for all of the extensive state-of-the-art research OVVO Labs has at its disposal.